Claude Code Leak - The Most Interesting Things People Found in Anthropic’s Exposed Code

When Anthropic accidentally exposed internal Claude Code source through a packaging mistake, the headline was obvious: a major AI coding tool had leaked. But the more interesting story came next. Developers, researchers, and curious users immediately started digging through the code to see what it revealed about Anthropic’s product direction, internal experiments, and how top AI coding agents are actually being built. Anthropic said the incident was caused by human error, not a breach, and that no customer data or credentials were exposed.

That is why this story matters more than simple tech gossip.

The leaked Claude Code files reportedly gave people a look at over 512,000 lines of code, enough for outsiders to spot unfinished features, internal comments, tool structures, memory ideas, and product experiments that Anthropic likely never intended to share publicly.

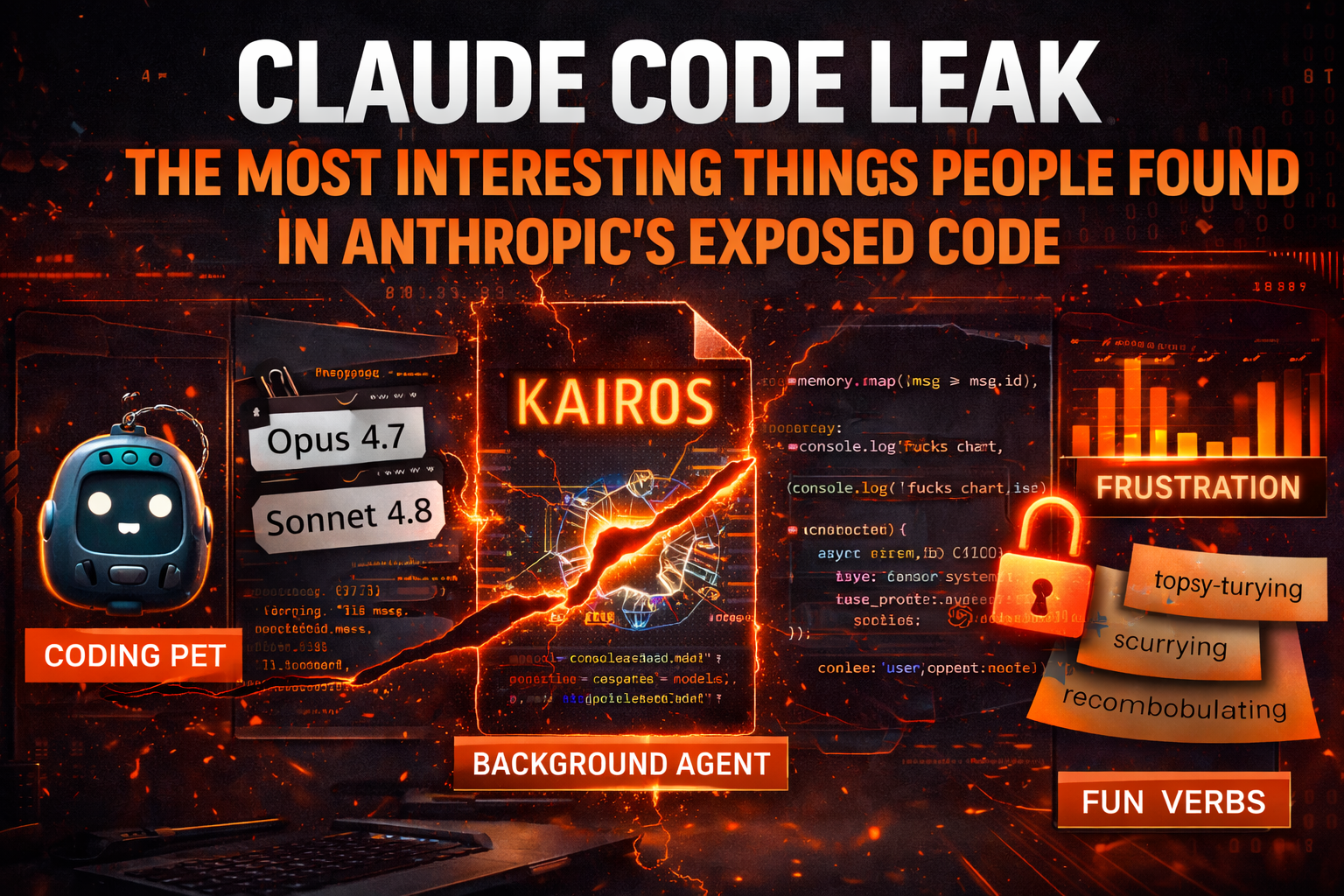

1. A Tamagotchi-style coding pet

One of the most shared discoveries was surprisingly playful.

People digging through the leaked code said they found references to a Tamagotchi-like pet that would sit beside the input box and react while you code. The Verge reported that users pointed to this as one of the more unusual unreleased experiments inside Claude Code, while Business Insider noted that the feature was widely discussed as a “coding pet.”

That matters for one reason in particular.

It shows Anthropic is not only thinking about raw functionality. It is also experimenting with emotional or companion-style interface layers around coding agents. That may sound small, but product design details like this often reveal how AI companies think about engagement, habit formation, and making tools feel more alive. This part is an inference from the kinds of experiments reported, not a confirmed Anthropic product plan.

2. KAIROS - the hint of an always-on background agent

Another heavily discussed finding was something called KAIROS.

The Verge reported that users said the leaked code referenced a KAIROS feature that could enable an always-on background agent, while Business Insider described users interpreting it as an agent that runs continuously, keeps a daily log, and acts like an “all-knowing teammate who notices and handles everything” before being asked. Business Insider also reported that Claude Code creator Boris Cherny publicly commented that Anthropic is “always experimenting” and that many tests never ship.

This is one of the biggest signals in the whole leak.

If the interpretation is broadly right, it suggests Anthropic is thinking beyond “assistant waits for prompt” and toward persistent agents that keep context, watch workflows, and act proactively. That is a much bigger product category than a chatbot in a terminal.

3. Hints about Claude Code’s memory architecture

Another reason people cared so much about the leak is that it appeared to offer clues about memory.

The Verge reported that users said the exposed code gave insight into Claude Code’s “memory” architecture, and Business Insider said researchers saw the leak as a rare look at how Anthropic approaches key agent problems like memory. One AI research scientist quoted by Business Insider said the main takeaway was not that Anthropic had been massively breached, but that outsiders got an unusually clear look at where AI coding agents are going.

This may be the most strategically important part.

Memory is one of the hardest and most commercially valuable pieces of modern AI agents. A better memory system can make an assistant feel more useful, more contextual, and more like a real teammate instead of a stateless chatbot. So even partial clues about how Anthropic structures that layer are meaningful to competitors and serious developers. That conclusion is an inference based on the reporting about memory-related findings.

4. Unreleased model names and internal codenames

People also shared references to what looked like unreleased models and internal codenames.

Business Insider reported that users shared screenshots of references to Opus 4.7, Sonnet 4.8, and codenames like Capybara and Tengu. That does not mean all of those names represent public launch plans, but it does suggest the leaked code exposed a layer of Anthropic’s internal experimentation and naming conventions.

This is exactly the kind of thing the internet loves because it feels like roadmap archaeology.

But it is also genuinely useful. Even loose signals about naming, version progression, and internal testing can help people understand how quickly a company is iterating and what kinds of future releases may be under consideration.

5. The famous “spinner verbs”

Not all the interesting details were serious.

Business Insider reported that users found a list of whimsical “spinner verbs” in the leaked code, including words like scurrying, recombobulating, and topsy-turvying. It became one of the lighter viral findings because it gave people a funny glimpse into the tone and personality choices inside the product.

This kind of detail matters more than it seems.

When AI tools become widely used, tiny touches like loading language, interface humor, and product voice help shape how people emotionally experience the system. These are small UX choices, but they are part of what makes a product feel memorable instead of interchangeable. That is an inference from the reported examples.

6. Analytics around user frustration

One of the more revealing findings had nothing to do with model architecture and everything to do with product measurement.

Business Insider reported that users said Claude logs swear words as a negative signal, and that Boris Cherny responded by saying these signals were used to measure whether users were having a good experience. Cherny reportedly said the team put this on a dashboard and called it the “fucks chart.”

This is a telling product clue.

It suggests Anthropic is monitoring emotional frustration as a real product metric, not just technical latency or raw task completion. In other words, the company appears to care about how annoyed users get during coding sessions, which makes sense for a tool trying to become a daily engineering companion.
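
As a rough illustration of the idea, and not Anthropic’s actual implementation (which the reporting does not detail), a frustration signal could be as simple as flagging messages that contain words from a list and tracking the share of flagged messages per day. Everything in this sketch is hypothetical: the word list, the function names `is_frustrated` and `daily_frustration_rate`, and the shape of the event data.

```python
import re
from collections import defaultdict

# Hypothetical word list; a real system would likely use a curated lexicon.
FRUSTRATION_WORDS = {"damn", "wtf", "ugh"}

_WORD_RE = re.compile(r"[a-z']+")


def is_frustrated(message: str) -> bool:
    """Return True if the message contains any word from the list."""
    words = _WORD_RE.findall(message.lower())
    return any(word in FRUSTRATION_WORDS for word in words)


def daily_frustration_rate(events):
    """Map each day to the share of its messages that were flagged.

    `events` is an iterable of (day, message) pairs (an assumed format).
    """
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for day, message in events:
        totals[day] += 1
        if is_frustrated(message):
            flagged[day] += 1
    return {day: flagged[day] / totals[day] for day in totals}


# Made-up sample data, purely for illustration.
events = [
    ("day-1", "wtf, that broke the build"),
    ("day-1", "thanks, that works"),
    ("day-2", "please rename this function"),
]
rates = daily_frustration_rate(events)
```

Matching whole words rather than substrings keeps a word like "laugh" from being flagged just because it contains "ugh". A daily rate like this is the kind of number that could feed a chart, though how Anthropic actually computes its dashboard is not described in the reporting.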

7. Internal comments that made the tool feel more human

Leaks often become compelling when they expose not just systems, but the people behind them.

The Verge reported that users highlighted an internal coder comment saying that a certain memoization approach increased complexity a lot and that the person was not sure it really improved performance. That line spread because it made one of the world’s most talked-about AI coding products feel suddenly human and normal: messy tradeoffs, uncertainty, imperfect engineering judgment.

That matters because it punctures the myth that elite AI products are built through magical certainty.

They are still built by teams making guesses, compromises, and experiments under pressure, just like the rest of the software industry.
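
The tradeoff in that comment is a familiar one: a cache adds state and complexity, and it only pays off when the same inputs actually repeat. A minimal sketch of how a developer might check that, using Python’s standard `functools.lru_cache`, is below. The function `render_label` and the sample workload are hypothetical stand-ins and have nothing to do with the leaked code itself.

```python
from functools import lru_cache


@lru_cache(maxsize=128)
def render_label(name: str) -> str:
    """Hypothetical stand-in for a function someone chose to memoize."""
    return name.strip().title()


def hit_rate(cached_fn) -> float:
    """Share of calls served from the cache (0.0 if never called)."""
    info = cached_fn.cache_info()
    calls = info.hits + info.misses
    return info.hits / calls if calls else 0.0


# A workload with few repeated inputs: only the second "alpha" is a hit.
for name in ["alpha", "beta", "gamma", "alpha"]:
    render_label(name)

rate = hit_rate(render_label)
```

A low hit rate on realistic traffic is one sign that memoization is adding complexity without buying much performance, which is the doubt the quoted comment expresses.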

8. The speed at which people recreated and spread it

A huge part of the online reaction was not only what the code contained, but how fast people acted on it.

Business Insider reported that once the leak was noticed, people rapidly mirrored the files, discussed them in Discord servers, and even recreated the tool in Python. The same report said a reproduction called Claw Code exploded in popularity, and that Anthropic initially pushed broad takedown requests before narrowing them. The Guardian also reported that the code spread quickly to GitHub and that rewritten versions moved extremely fast online.

That may be the deepest lesson in the whole event.

In modern AI, once a meaningful product leak hits the public internet, containment gets very hard very quickly. This was not just a source exposure story. It was a live demonstration of how fast developers can copy, analyze, repackage, and redistribute valuable software now.

What this reveals about where AI coding tools are heading

If you step back from the leak drama, the findings point in a clear direction.

The most-discussed discoveries were not about static autocomplete. They were about memory, persistent agents, interface personality, workflow monitoring, and systems that feel more proactive than reactive. The Verge and Business Insider both framed the leak as giving outsiders a rare look into Anthropic’s thinking about the future of coding agents.

That is the real signal.

The future AI coding race may not be won by whoever has the prettiest demo or the best one-off benchmark. It may be won by whoever builds the most useful long-running work companion: something that remembers context, notices problems, reacts intelligently, and fits naturally into a developer’s daily environment. This is an inference from the reported experimental features and commentary.

Final thoughts

The leaked Claude Code files were embarrassing for Anthropic, but they were also fascinating for the rest of the industry.

People online were not only looking for scandal. They were looking for clues. And the clues they think they found - the coding pet, KAIROS, memory hints, unreleased model names, frustration dashboards, funny spinner verbs, and candid engineering comments - paint a picture of a company experimenting aggressively with what an AI coding agent could become.

So the most interesting part of the leak may not be that source code got out.

It may be that, for a brief moment, the industry got to see where one of the top AI coding teams appears to believe the category is heading.