Claude Code Just Got Agent View. This Is What AI Coding Is Turning Into

AI coding is moving past the chat window.

For the last two years, most developers have used AI coding tools in a very simple way.

Open the tool. Ask for help. Review the answer. Copy the code. Run the code. Fix the errors. Repeat.

That workflow was useful. It made developers faster. It helped with debugging, boilerplate, refactoring, and learning unfamiliar APIs.

But it was still mostly one developer working with one AI conversation at a time.

Claude Code’s new Agent View points to a different future.

Not one AI assistant sitting beside you.

Multiple AI coding agents running in parallel, each working on a different task, while the developer moves into more of a reviewer, planner, and technical director role.

That is the real story.

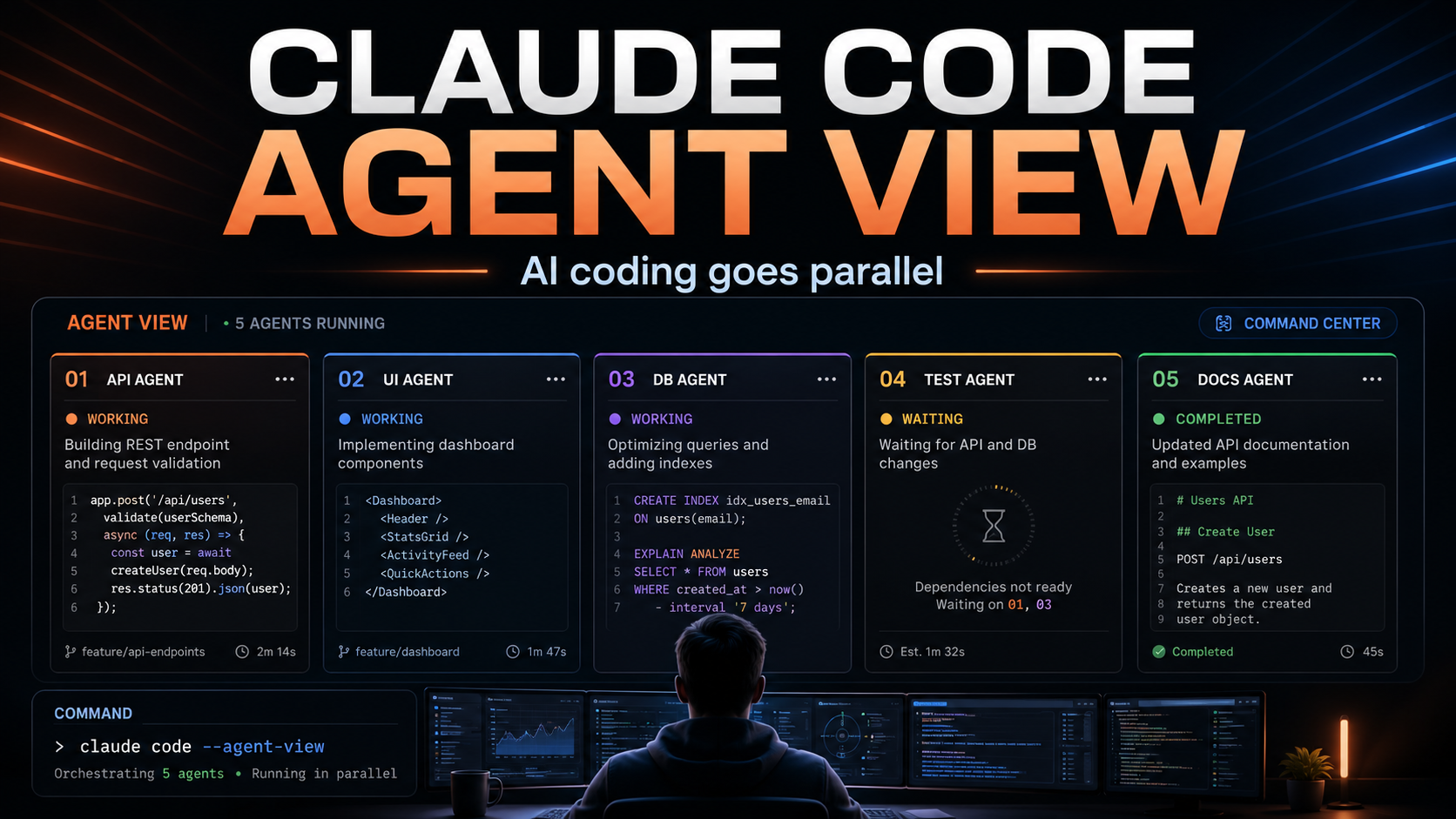

What Claude Code Agent View actually is

Anthropic introduced Agent View in Claude Code as one place to manage multiple Claude Code sessions.

Instead of opening several terminal tabs, using a tmux grid, or trying to remember which agent is doing what, Agent View gives developers a single screen where they can see their active sessions.

One session might be fixing a bug.

Another might be investigating a failing test.

Another might be reviewing a pull request.

Another might be updating a dashboard.

Another might be exploring a new implementation idea.

The developer can see which sessions are still working, which ones are waiting for input, which ones are done, and which ones failed.
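To make those four states concrete, here is a minimal sketch of how a list of sessions could be grouped by status. It is an illustration only, not Agent View's implementation. The `Status`, `Session`, `group_by_status`, and `needs_attention` names are hypothetical, and the sample tasks are made up.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    # The four states named in the article.
    WORKING = "working"
    WAITING_FOR_INPUT = "waiting for input"
    DONE = "done"
    FAILED = "failed"


@dataclass
class Session:
    # Hypothetical record; not Claude Code's actual data model.
    task: str
    status: Status


def group_by_status(sessions):
    """Return a dict mapping each Status to the task names in that state."""
    grouped = defaultdict(list)
    for session in sessions:
        grouped[session.status].append(session.task)
    return dict(grouped)


def needs_attention(sessions):
    """Tasks where the developer has to step in: waiting or failed."""
    return [
        s.task
        for s in sessions
        if s.status in (Status.WAITING_FOR_INPUT, Status.FAILED)
    ]


# Made-up sample data for the sketch.
sessions = [
    Session("fix login bug", Status.WORKING),
    Session("investigate failing test", Status.WAITING_FOR_INPUT),
    Session("review pull request", Status.DONE),
    Session("update dashboard", Status.FAILED),
]

for status, tasks in group_by_status(sessions).items():
    print(f"{status.value}: {', '.join(tasks)}")
```

The useful part is `needs_attention`: with several sessions open, the question is rarely "what is everything doing" and more often "which ones need me right now."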

That sounds like a small interface improvement.

It is not.

It changes the mental model of AI-assisted development.

From AI pair programmer to AI task manager

The first generation of AI coding tools felt like pair programming.

You asked a question. The AI answered. You stayed in the loop at every step.

That is still useful, especially for complex work where judgment matters.

But Agent View moves closer to a task management model.

You are not just chatting with Claude.

You are dispatching work.

You are checking status.

You are stepping in only when a decision is needed.

You are reviewing outputs instead of manually driving every line of code.

That is a much bigger shift than “better autocomplete.”

It is closer to having a small team of junior developers or assistants working in parallel, except they are software agents.

And like any junior team, they still need direction, review, testing, and judgment.

Why this matters for real software work

Most software work is not one clean task.

A real development day looks more like this:

Fix this broken integration.

Check why this build failed.

Review these changes.

Update this component.

Investigate a bug that only appears in production.

Write tests for the new function.

Clean up old code.

Check whether this library update breaks anything.

Before AI agents, a developer had to move through these tasks one by one.

With parallel agent sessions, some of this work can happen at the same time.

That does not mean the developer disappears.

It means the developer’s bottleneck changes.

The bottleneck becomes less about writing every implementation detail manually and more about deciding what should be done, how it should be tested, what tradeoffs are acceptable, and whether the final result is good enough to ship.

That is a different job.

Still technical, but more orchestration-heavy.

The developer becomes the reviewer

This is the part many people underestimate.

AI agents do not remove the need for developers.

They increase the importance of developers who can review.

A coding agent can produce a pull request.

But someone still needs to ask:

Does this solve the actual problem?

Did it introduce a hidden bug?

Is the architecture still clean?

Is the security model safe?

Will this scale?

Does this match the product direction?

Is this overengineered?

Is the code maintainable?

Agent View makes it easier to run more AI work in parallel, but that also means more output to review.

The faster the agents become, the more valuable good technical judgment becomes.

This is why experienced developers, agencies, and technical founders may benefit more from these tools than beginners.

A beginner may generate a lot of code and not know what is wrong with it.

An experienced developer can generate a lot of code and quickly separate useful work from dangerous work.

Why agencies should pay attention

For web development agencies, this kind of tooling matters a lot.

Agency work is full of parallel tasks.

One client needs a Shopify fix.

Another needs a WordPress update.

Another needs a Webflow animation issue checked.

Another needs an API integration debugged.

Another needs a performance pass.

Another needs content migrated.

Traditionally, agencies either hire more people or accept that work moves sequentially.

AI agent workflows create a third option.

A developer can split work into smaller, independent tasks and let agents investigate, draft, test, or prepare changes in parallel.
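The shape of that idea can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not how Claude Code dispatches work. `run_agent_task` is a hypothetical placeholder that only returns a label, and `dispatch` is an illustrative helper built on the standard library's thread pool.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def run_agent_task(description):
    # Placeholder: a real workflow would hand the task to a coding agent here.
    # This stand-in only returns a label so the sketch stays runnable.
    if not description.strip():
        raise ValueError("task description is empty")
    return f"draft for: {description}"


def dispatch(tasks, max_parallel=3):
    """Run independent tasks in parallel; keep drafts and failures apart."""
    drafts, failures = {}, {}
    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        futures = {pool.submit(run_agent_task, t): t for t in tasks}
        for future in as_completed(futures):
            task = futures[future]
            try:
                drafts[task] = future.result()
            except Exception as exc:
                # A failed task is recorded, not hidden.
                failures[task] = str(exc)
    return drafts, failures


# Made-up tasks; the blank one shows how a badly defined task ends up in failures.
tasks = ["debug API integration", "run performance pass", "  "]
drafts, failures = dispatch(tasks)
print(drafts)
print(failures)
```

Everything that comes back is a draft. Nothing in this sketch merges or deploys anything, which mirrors the point of the section: the parallel part is cheap, and the review step still belongs to a person.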

That does not replace the agency.

It changes what a strong agency can deliver.

The agency still needs client communication, strategy, quality control, design judgment, UX decisions, SEO understanding, deployment experience, and accountability.

But the execution layer gets faster.

For clients, the value is not “we use AI.”

The value is faster diagnosis, faster iteration, better technical coverage, and more time spent on higher-level decisions.

Why freelancers should pay attention too

Freelancers should also watch this closely.

The solo developer with strong AI workflows is becoming more powerful.

A freelancer who knows how to break work into clean agent tasks can operate more like a small team.

One agent can investigate.

One can refactor.

One can write tests.

One can check docs.

One can prepare a first implementation.

The freelancer then reviews, edits, tests, and ships.

This can make solo work much more scalable.

But it can also create a dangerous illusion.

Running five agents does not mean producing five times as much good work.

It can mean producing five times more code to review.

The best freelancers will not be the ones who blindly generate the most code.

They will be the ones who know when to use agents, how to constrain them, and how to verify the result.
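One way to picture "constrain and verify" is a written task brief plus a scope check on whatever the agent changed. The sketch below assumes a hypothetical `TaskBrief` structure and an `out_of_scope` helper. Neither is part of Claude Code, and the file paths are invented for the example.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatch


@dataclass
class TaskBrief:
    # Hypothetical structure for illustration only.
    goal: str
    allowed_paths: list = field(default_factory=list)  # glob patterns the agent may touch
    done_when: str = ""  # plain-language acceptance check


def out_of_scope(brief, changed_files):
    """Return the changed files that match none of the allowed patterns."""
    return [
        path
        for path in changed_files
        if not any(fnmatch(path, pattern) for pattern in brief.allowed_paths)
    ]


brief = TaskBrief(
    goal="Fix the failing date-parsing test",
    allowed_paths=["src/dates/*", "tests/test_dates.py"],
    done_when="tests/test_dates.py passes without changes to other modules",
)

# Invented list of files an agent reported changing.
changed = ["src/dates/parse.py", "tests/test_dates.py", "src/billing/invoice.py"]
print(out_of_scope(brief, changed))
```

A check like this does not prove the change is correct. It only flags that the agent wandered outside the brief, which is often the first thing worth knowing before reading the diff.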

This is not fully autonomous development

It is important not to overhype this.

Agent View does not mean software now builds itself.

Anthropic itself describes the feature as a Research Preview, and it comes with practical limitations: background sessions consume usage quota, run locally, and stop if the machine sleeps or shuts down.

That matters.

This is not a magical cloud team of autonomous engineers working forever in the background.

It is a developer tool.

A powerful one, but still a tool.

The developer remains responsible for the outcome.

That includes code quality, security, testing, product fit, and deployment.

The better framing is not “AI replaces developers.”

The better framing is “developers are getting a control panel for AI coding labor.”

That is a big deal on its own.

The terminal is becoming a command center

One interesting part of Agent View is that it keeps the workflow close to the terminal.

That matters because serious development still happens close to the codebase.

The browser-based AI chat experience is useful for thinking, writing, and planning.

But coding agents need access to repositories, files, tests, commands, logs, and local context.

The terminal is where much of that lives.

Agent View turns the terminal from a single conversation into something closer to a command center.

You can dispatch work.

You can peek into sessions.

You can reply without fully switching context.

You can attach to a session when deeper interaction is needed.

You can send sessions into the background.

That is exactly the kind of workflow serious developers need if AI agents are going to be used for real production work.

The future is not one giant AI agent

A lot of people imagine the future of software development as one huge AI agent that understands everything and builds the entire product.

Maybe we get closer to that eventually.

But the more realistic near-term future looks different.

Many smaller agents.

Each with a narrow task.

Each working in a controlled environment.

Each producing something the developer can inspect.

That is much more practical.

Software projects are too complex to hand everything to one agent with a vague instruction.

But they are full of smaller jobs that can be delegated.

Fix this failing test.

Explain this error.

Find where this bug starts.

Update this component.

Compare these two approaches.

Check this pull request.

Write a migration draft.

That is where Agent View makes sense.

It is not about removing structure.

It is about making AI work inside a more structured developer workflow.

What businesses should understand

For business owners, the lesson is simple.

AI-assisted development is becoming more operational.

It is no longer just about asking ChatGPT to write a function.

The serious tools are moving toward workflows, agents, background tasks, pull requests, review loops, and multi-agent coordination.

That means software teams may become faster.

But it also means the quality gap between teams may become wider.

A strong technical team using AI agents well can move much faster.

A weak team using AI agents carelessly can create more bugs, more messy code, and more technical debt.

The tool does not remove the need for process.

It increases the importance of process.

Good prompts matter.

Good task definition matters.

Good testing matters.

Good review matters.

Good architecture matters.

The companies that understand this will get more value from AI coding tools than the companies that simply tell their developers to “use AI.”

The real shift

Claude Code Agent View is not just another feature.

It is a signal.

AI coding is becoming parallel.

AI coding is becoming agent-based.

AI coding is becoming more about orchestration than one-off prompting.

For developers, this means the skillset is changing.

Writing code still matters.

But defining work clearly matters more.

Reviewing code matters more.

Testing matters more.

Understanding architecture matters more.

Knowing what not to delegate matters more.

For agencies and freelancers, this is an opportunity.

The teams that learn how to use AI agents without losing quality will be able to deliver faster, explore more options, and handle more technical complexity.

The teams that treat AI agents like magic will create noise.

That is probably the line that separates serious AI-assisted development from hype.

Not how many agents you can run.

But how much trustworthy work you can actually ship.

Source: https://claude.com/blog/agent-view-in-claude-code