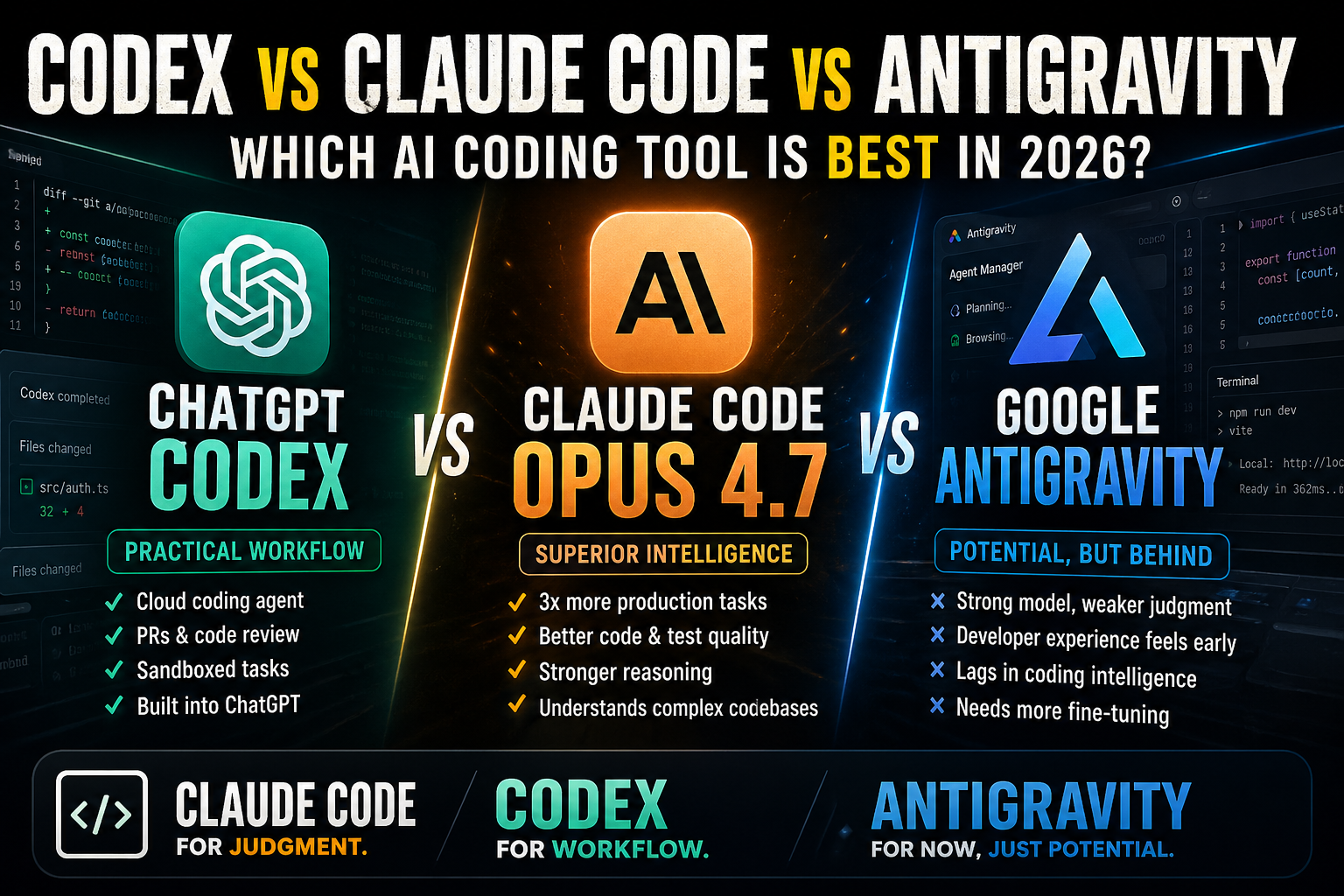

ChatGPT Codex vs Claude Code Opus 4.7 vs Google Antigravity: Which AI Coding Tool Is Best in 2026?

AI coding tools are no longer just autocomplete.

They are becoming coding partners, debugging assistants, architecture reviewers, refactoring agents, and in some cases full software engineering environments.

That changes the question.

A few years ago, the question was:

“Which AI writes the best code snippet?”

In 2026, the better question is:

“Which AI coding tool can actually help me build, understand, debug, refactor, and ship real software without creating a mess?”

Right now, the three most interesting options are ChatGPT Codex, Claude Code with Opus 4.7, and Google Antigravity with Gemini 3.1 Pro.

All three can write code.

All three can help developers move faster.

But they are not equal.

Claude Code with Opus 4.7 feels like the strongest coding brain.

ChatGPT Codex feels like the most practical all-around coding workflow.

Google Antigravity with Gemini 3.1 Pro feels like a strong idea from Google, but still behind in coding intelligence, judgment, and real developer confidence.

And that is the important point.

This is not about saying Gemini is bad.

Gemini 3.1 Pro is not bad. It has strong benchmarks, a huge context window, multimodal input, and Google’s infrastructure behind it. Google’s own Gemini 3.1 Pro model card lists strong coding-related benchmark numbers, including 80.6% on SWE-Bench Verified and 54.2% on SWE-Bench Pro Public.

But coding intelligence is not only about one benchmark.

For real software development, the harder question is:

Can the model understand the actual architecture?

Can it find the real cause of a bug?

Can it avoid unnecessary rewrites?

Can it make safe multi-file changes?

Can it improve code quality without overengineering?

Can it respect the existing project instead of trying to rebuild everything?

Can it behave more like a senior developer and less like a powerful autocomplete system?

That is where Claude Code and Codex still feel ahead.

The quick answer

If I had to simplify it:

Claude Code with Opus 4.7 is best for deep engineering work, hard debugging, architecture reasoning, and production-quality refactoring.

ChatGPT Codex is best for practical day-to-day coding workflows, cloud tasks, feature implementation, pull request work, and developers already living inside the OpenAI ecosystem.

Google Antigravity with Gemini 3.1 Pro is interesting, but I would not choose it as my main coding environment yet.

Not because Google lacks resources.

Google has the model infrastructure, cloud platform, browser, Android, Firebase, Workspace, and massive developer reach.

The problem is that Antigravity and Gemini 3.1 Pro still do not feel like the strongest option for serious coding intelligence.

Until Google ships a more coding-specialized Gemini version, or fine-tunes the Antigravity workflow around real production engineering behavior, I would still put Claude Code and Codex ahead.

Pricing: Gemini is cheaper, Claude is premium, Codex is bundled into a larger workflow

Pricing matters, but it can also be misleading.

Gemini 3.1 Pro looks attractive on paper. Google’s Gemini API pricing lists Gemini 3.1 Pro Preview at $2 per million input tokens and $12 per million output tokens for prompts up to 200k tokens, and $4 input / $18 output above 200k tokens. That is cheaper than Claude Opus 4.7.

Claude Opus 4.7 is priced at $5 per million input tokens and $25 per million output tokens, the same official price as Opus 4.6.
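To make the API numbers concrete, here is a minimal sketch of the per-request arithmetic. It uses only the list prices quoted above. The function names and the example token counts are my own illustration, and I am assuming the 200k threshold applies to the prompt (input) size of a single request.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rate:
    """Price in USD per one million tokens."""
    input_per_million: float
    output_per_million: float


# List prices as quoted in this article.
GEMINI_31_PRO_STANDARD = Rate(2.0, 12.0)  # prompts up to 200k tokens
GEMINI_31_PRO_LONG = Rate(4.0, 18.0)      # prompts above 200k tokens
CLAUDE_OPUS_47 = Rate(5.0, 25.0)

# Assumption: the tier is chosen by the prompt size of one request.
LONG_PROMPT_THRESHOLD = 200_000


def request_cost(rate: Rate, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request at the given rate."""
    total = (input_tokens * rate.input_per_million
             + output_tokens * rate.output_per_million)
    return total / 1_000_000


def gemini_cost(input_tokens: int, output_tokens: int) -> float:
    """Gemini 3.1 Pro Preview cost, picking the tier from the prompt size."""
    if input_tokens > LONG_PROMPT_THRESHOLD:
        rate = GEMINI_31_PRO_LONG
    else:
        rate = GEMINI_31_PRO_STANDARD
    return request_cost(rate, input_tokens, output_tokens)


def opus_cost(input_tokens: int, output_tokens: int) -> float:
    """Claude Opus 4.7 cost at its flat list price."""
    return request_cost(CLAUDE_OPUS_47, input_tokens, output_tokens)


if __name__ == "__main__":
    # Hypothetical request sizes, chosen only for illustration.
    for inp, out in [(50_000, 5_000), (300_000, 5_000)]:
        print(f"{inp:>7} in / {out} out: "
              f"Gemini ${gemini_cost(inp, out):.3f}, "
              f"Opus ${opus_cost(inp, out):.3f}")
```

For a hypothetical request with 50,000 input tokens and 5,000 output tokens, that works out to $0.16 on Gemini and $0.375 on Opus. Above the 200k threshold the gap narrows: 300,000 input tokens and 5,000 output tokens cost $1.29 on Gemini and $1.625 on Opus.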

Codex pricing is different because it is tied to ChatGPT plans and Codex usage limits. OpenAI’s Codex pricing page says the $100 Pro plan normally gives 5x Plus usage, but currently gets a temporary 2x launch boost, making it 10x Plus usage through May 31, 2026. The $200 Pro plan includes 20x Plus usage on an ongoing basis, with temporarily higher 5-hour Codex limits at 25x Plus through May 31, 2026.

So the pricing picture looks like this:

Gemini is the cheapest on API pricing.

Claude Opus is the most premium model.

Codex is attractive if you already use ChatGPT and want coding included inside a broader AI workspace.

But the cheapest model is not always the cheapest tool.

If Gemini produces code that needs more correction, misses architectural context, or makes changes that require cleanup, the saved API cost disappears quickly.

For developers, the real cost is not only tokens.

The real cost is time, broken code, wrong assumptions, and debugging the AI’s work.
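A rough way to see how quickly a token saving disappears is to add cleanup time to the bill. The sketch below is a back-of-the-envelope model, not a measurement: the hourly rate, the per-task saving, and the cleanup minutes are all hypothetical numbers I picked for illustration.

```python
def effective_cost(api_cost_usd: float,
                   cleanup_minutes: float,
                   hourly_rate_usd: float) -> float:
    """API cost plus the cost of developer time spent fixing the output."""
    return api_cost_usd + (cleanup_minutes / 60) * hourly_rate_usd


def break_even_minutes(api_saving_usd: float, hourly_rate_usd: float) -> float:
    """Minutes of extra cleanup that cancel out a given API saving."""
    return api_saving_usd / hourly_rate_usd * 60


if __name__ == "__main__":
    HOURLY_RATE_USD = 60.0  # hypothetical developer cost
    API_SAVING_USD = 0.20   # hypothetical saving per task on the cheaper model

    minutes = break_even_minutes(API_SAVING_USD, HOURLY_RATE_USD)
    print(f"Saving is gone after {minutes * 60:.0f} seconds of extra cleanup")

    # Hypothetical task: the cheaper model needs 5 minutes of cleanup,
    # the pricier one needs none.
    cheaper = effective_cost(0.16, cleanup_minutes=5, hourly_rate_usd=HOURLY_RATE_USD)
    pricier = effective_cost(0.36, cleanup_minutes=0, hourly_rate_usd=HOURLY_RATE_USD)
    print(f"Cheaper model: ${cheaper:.2f}, pricier model: ${pricier:.2f}")
```

Under these assumed numbers, a $0.20 saving is wiped out by about 12 seconds of extra cleanup, and five minutes of cleanup turns a $0.16 task into a $5.16 one. The exact figures will differ for every team, but the shape of the tradeoff stays the same.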

Coding intelligence: this is where Claude Opus 4.7 wins

Claude Code with Opus 4.7 is the tool I would trust most when the task requires judgment.

That word matters: judgment.

Many models can generate code.

Fewer models can understand whether the code should exist, whether the current architecture supports it, whether the requested fix is actually the right fix, and whether a small change is safer than a large rewrite.

Anthropic’s Opus 4.7 announcement is heavily focused on real engineering performance. Anthropic says Opus 4.7 resolves 3x more production tasks than Opus 4.6 on Rakuten-SWE-Bench, with double-digit gains in Code Quality and Test Quality.

That is exactly the kind of improvement that matters to developers.

Not just “can it write code?”

But:

Can it solve production tasks?

Can it produce better tests?

Can it improve quality?

Can it handle messy, real-world engineering work?

Claude Code with Opus 4.7 feels strongest when the problem is not isolated.

Examples:

A bug spreads across multiple files.

A state management issue is caused by an earlier design decision.

A component works, but the architecture is becoming messy.

A backend rule conflicts with a frontend assumption.

A Firebase permission issue is mixed with client-side logic.

A large refactor needs to preserve behavior.

This is where Claude feels closer to a senior engineering partner.

It does not only produce an answer. It tends to reason more carefully about the surrounding system.

That is why, for hard development work, Claude Code with Opus 4.7 is my first choice.

Codex is not always the sharpest brain, but it may be the best workflow

ChatGPT Codex is strong in a different way.

Claude may still feel stronger for deep coding intelligence, but Codex is becoming extremely practical because of the environment around it.

OpenAI describes Codex as a cloud-based software engineering agent that can write features, answer questions about a codebase, fix bugs, and propose pull requests for review. Each task runs in its own cloud sandbox environment preloaded with the repository.

That is a big deal.

Codex is not just a model that writes code in a chat window.

It is becoming a coding workflow.

You can use it for:

Feature implementation

Bug fixes

Codebase questions

Pull request suggestions

Code review

Parallel coding tasks

Cloud-based work

Local terminal workflows

IDE workflows

OpenAI also has Codex CLI features that let developers work with Codex Cloud from the terminal, browse active or finished tasks, and apply changes to a local project.

This makes Codex especially useful for solo founders, agencies, and teams that want speed.

It may not always feel as thoughtful as Claude Opus 4.7 on a very hard architecture problem, but it is practical.

And practical matters.

If I need to build a landing page section, fix a contained bug, generate a new component, update a form flow, or ask questions about a codebase, Codex is very strong.

Its advantage is not only intelligence.

Its advantage is workflow.

Gemini 3.1 Pro: strong model, weaker coding judgment

This is the part I would be direct about.

Gemini 3.1 Pro is impressive as a general model.

It has strong reasoning benchmarks.

It has a huge context window.

It is multimodal.

It is cheaper than Claude Opus.

It is backed by Google.

But in coding, it still feels behind Claude Opus 4.7 and Codex.

Not necessarily because it cannot produce working code.

It can.

The issue is coding judgment.

Gemini often feels more like a powerful general model that can code, while Claude Opus 4.7 feels more like a model shaped around engineering reasoning.

That difference matters.

A coding model does not only need to know syntax.

It needs to understand tradeoffs.

It needs to know when to make the smallest safe change.

It needs to avoid rewriting working systems.

It needs to infer what the developer actually wants.

It needs to reason about project-specific constraints.

It needs to remember that production code is not a demo.

This is where Gemini still feels less mature.

On Google’s own Gemini Pro comparison page, Gemini 3.1 Pro scores 80.6% on SWE-Bench Verified, but the same table shows Codex ahead on two harder measures. On SWE-Bench Pro Public, Gemini 3.1 Pro is listed at 54.2% and Codex at 56.8%. On Terminal-Bench 2.0, Gemini is listed at 68.5% and Codex at 77.3% on the self-reported harness line.

Benchmarks are imperfect, and different harnesses are not always directly comparable.
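For readers who want the gaps in percentage points, here is a small sketch that computes them from the scores quoted above. The dictionary layout and function name are my own, and the Terminal-Bench 2.0 figure for Codex is the self-reported harness number, so that gap should be read with the caveat above in mind.

```python
# Scores in percent, as quoted in this article from Google's comparison table.
SCORES = {
    "SWE-Bench Pro Public": {"Gemini 3.1 Pro": 54.2, "Codex": 56.8},
    # Codex figure here is from the self-reported harness line.
    "Terminal-Bench 2.0": {"Gemini 3.1 Pro": 68.5, "Codex": 77.3},
}


def gap_in_points(scores: dict, leader: str, trailer: str) -> dict:
    """Percentage-point lead of `leader` over `trailer` on each benchmark."""
    return {
        bench: round(results[leader] - results[trailer], 1)
        for bench, results in scores.items()
    }


GAPS = gap_in_points(SCORES, "Codex", "Gemini 3.1 Pro")

if __name__ == "__main__":
    for bench, gap in GAPS.items():
        print(f"{bench}: Codex leads by {gap} points")
```

That gives a 2.6-point lead for Codex on SWE-Bench Pro Public and an 8.8-point lead on Terminal-Bench 2.0.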

But the direction matches the real-world experience: Gemini is strong, but not obviously the best coding intelligence tool.

It is competitive on paper.

It is not yet the tool I would trust first for serious production engineering.

Why Antigravity feels behind

Google Antigravity is a good idea.

The concept makes sense: an agentic development platform where AI can work across the editor, terminal, and browser.

Google describes Gemini 3.1 Pro as available in Antigravity, with the model intended for advanced reasoning and complex tasks.

The direction is right.

But the product still feels early compared with Claude Code and Codex.

Claude Code feels closer to the terminal and the real codebase.

Codex feels connected to a broader developer workflow.

Antigravity still feels more like Google proving a concept than Google owning the coding workflow.

The difference is trust.

When I use an AI coding tool, I want to trust that it will:

Inspect the right files

Understand the existing structure

Make minimal safe changes when needed

Know when a change is risky

Explain tradeoffs clearly

Avoid pretending it understands something it does not

Recover well when something fails

Claude Code and Codex are stronger here.

Antigravity may improve quickly, but today I would not choose it first for a complex production refactor.

Until Google fine-tunes a more coding-specialized version, Gemini remains behind

This is the key point.

Google may close the gap.

In fact, it will probably improve fast.

Google has too many advantages not to be taken seriously: Gemini, Google Cloud, Firebase, Android, Chrome, Workspace, huge infrastructure, and access to developer workflows.

But as of now, Gemini 3.1 Pro still feels like a strong general intelligence model being used for coding.

Claude Opus 4.7 feels more like a model that understands engineering work.

Codex feels like a product built around the software development loop.

Gemini needs either a more coding-specialized version, better fine-tuning for production engineering behavior, or a much more mature Antigravity workflow before I would rank it above Claude Code or Codex.

Until then, I would describe Gemini this way:

Gemini 3.1 Pro is impressive, but it still feels like potential.

Claude Code feels like technical judgment.

Codex feels like practical execution.

Antigravity feels like Google’s early attempt to connect the pieces.

Best use cases for Claude Code with Opus 4.7

Claude Code with Opus 4.7 is best when the problem is expensive to get wrong.

Use it for:

Complex debugging

Large refactors

Architecture review

Production bug analysis

Multi-file reasoning

Security-sensitive changes

Reviewing messy code

Improving test quality

Understanding a large codebase

Making careful changes in mature projects

Claude is the tool I would use when I need the AI to think before it writes.

That is the main difference.

It feels less like “generate code for me” and more like “help me make the right engineering decision.”

Best use cases for ChatGPT Codex

Codex is best when you want speed and workflow.

Use it for:

Building new features

Creating first versions of components

Fixing scoped bugs

Answering codebase questions

Delegating cloud coding tasks

Pull request review

Running parallel implementation tasks

Working inside the OpenAI ecosystem

Codex is especially useful if ChatGPT is already part of your daily work.

For many developers, that is the advantage.

You may already use ChatGPT for planning, research, writing, debugging, and decision-making. Codex brings coding closer to that same environment.

That makes it very practical.

Best use cases for Google Antigravity with Gemini 3.1 Pro

Use Antigravity if you want to test Google’s direction.

Use it for:

Experimenting with Gemini 3.1 Pro

Testing Google’s agentic coding platform

Comparing Gemini against Claude and Codex

Exploring Google Cloud-related workflows

Trying multimodal development tasks

Seeing how Antigravity evolves

But I would not make it the main coding tool yet.

Not for serious production work.

Not for complex refactoring.

Not for architecture-heavy decisions.

Not if I need maximum trust today.

Antigravity is interesting, but still behind.

Which one is best for solo founders?

For solo founders, I would use both Claude Code and Codex.

Claude Code with Opus 4.7 for hard engineering decisions.

Codex for faster implementation and workflow.

That combination is powerful.

Claude helps you think.

Codex helps you move.

Gemini can be useful as a third opinion, especially because it is cheaper and has strong context capabilities. But I would not put it first for the hardest coding work.

If you are building a real SaaS, your biggest risk is not whether the AI can generate code.

Your biggest risk is whether the AI generates the wrong code confidently.

That is why coding judgment matters so much.

Which one is best for agencies?

For agencies, Codex may be the most practical default.

Agency work often includes many scoped development tasks:

Fix this page.

Build this component.

Update this integration.

Explain this old codebase.

Review this pull request.

Create a first version quickly.

For this type of work, Codex is very useful.

But Claude Code should still be the premium option for difficult engineering work.

If a client project has a complicated bug, messy architecture, or a risky migration, I would rather use Claude Code with Opus 4.7.

Antigravity would not be my agency default yet.

Which one is best for enterprise?

Enterprise adoption depends on more than model quality.

It depends on security, permissions, procurement, support, integrations, auditability, and how well the tool fits inside existing engineering workflows.

Codex has a strong enterprise story because it is becoming a full coding agent platform connected to cloud tasks, pull requests, and OpenAI’s broader ecosystem.

Claude Code has a strong enterprise story because the coding intelligence is excellent.

Google should have a strong enterprise story because of Google Cloud and Workspace.

But Antigravity still has to prove itself as a daily developer tool.

Enterprises may trust Google as a platform.

That does not automatically mean developers will trust Antigravity as their main coding agent.

My ranking

For serious coding work in 2026, my ranking is:

1. Claude Code with Opus 4.7 for coding intelligence, judgment, and deep engineering work

2. ChatGPT Codex for practical workflow, cloud tasks, implementation speed, and OpenAI ecosystem integration

3. Google Antigravity with Gemini 3.1 Pro for experimentation, not primary production work

This is not because Gemini is useless.

It is because coding is not only about raw intelligence.

Coding is about taste, context, safety, judgment, consistency, and knowing what not to do.

Claude is strongest there.

Codex is the most practical full workflow.

Gemini still feels like it needs another step.

Final verdict

ChatGPT Codex and Claude Code are the two AI coding tools I would take most seriously today.

Claude Code with Opus 4.7 is the better engineering brain.

Codex is the better all-around coding workflow.

Google Antigravity with Gemini 3.1 Pro is interesting, but still behind in the kind of coding intelligence that matters for production software.

Gemini may catch up after a more coding-specialized version, better fine-tuning, or a more mature Antigravity environment.

But right now, I would not put it above Claude Code or Codex.

If the work is simple, all three can help.

If the work is serious, I would start with Claude Code or Codex.

And if the code really matters, I would still trust Claude Opus 4.7 first.